Despite being the CTO of an ISP I’m not a geek. The project to install an Olympics communications infrastructure, although being highly technical, has nuances and beauties that you don’t have to be a geek to get your brain around. Read on for more information.

Availability

Availability is clearly a key aspect of what has been delivered. This is a complex model though. The classic telecoms target of five nines (i.e. 99.999% availability of services, or 5 minutes or so of downtime a year) doesn’t necessarily fit the bill because it is a long term reliability measure and the infrastructure for the Olympics needs only to work for a few weeks. Any unavailability of services during that period could be catastrophic.
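For anyone who wants to check the five nines arithmetic, here is a minimal sketch. The function name and the 17-day games window are my own assumptions for illustration and are not figures from BT.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def allowed_downtime_minutes(availability_percent, period_minutes=MINUTES_PER_YEAR):
    """Return the downtime budget (in minutes) implied by an availability target."""
    return period_minutes * (1 - availability_percent / 100)


# Five nines measured over a whole year.
per_year = allowed_downtime_minutes(99.999)

# The same target applied to an assumed 17-day games window (illustrative only).
games_window_minutes = 17 * 24 * 60
per_games = allowed_downtime_minutes(99.999, games_window_minutes)

print(f"Per year: {per_year:.2f} minutes")
print(f"Per 17-day window: {per_games * 60:.1f} seconds")
```

Over a year the budget comes out at a little over five minutes. Squeezed into a window of a couple of weeks it shrinks to a matter of seconds, which is one way of seeing why a long term measure sits awkwardly with a short event.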

In order to support high availability BT has taken an absolutely no-risk strategy. For example all equipment being used has been on the market for at least two years. Specs for PCs, laptops, servers, network equipment, printers etc. were selected during 2010 with subsequent freeze milestones for software, detailed architecture and configuration.

On a parochial note no changes have been allowed to the network in London since March 2012. This means that ISPs like Timico will not have been able to provision some new London based circuits since that time.

The term “Availability” also has different connotations depending on your perspective. Some aspects of the games are very short term: the visiting population will be highly transitory and a number of venues are temporary. At the same time there is a duty to provide a significant ongoing legacy with some of the facilities. In considering what kit and systems to use BT therefore needed to think about what might still be “in production” and supportable perhaps five or ten years after the games have ended.

Performance/Capacity

Although previous Olympic games will have been considered during the planning of London 2012, the reality is that our internet usage has changed so much over the last few years that looking back is likely to be of limited benefit.

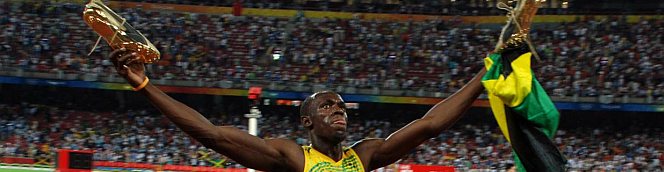

I’ve already referred to the use of social networks but changes in demand are just as likely to be affected by the introduction of new physical technologies. Professional grade digital cameras for example use up to 24.5Mpixels to produce RAW format photos of around 138Mbytes, and at 5 frames per second (Nikon D3X)!

Imagine the increased load on the network when all the photographers try uploading photos immediately after Usain Bolt has crossed the finishing line in the final of the 100m. 2.7Mbps per person suddenly doesn’t seem out of kilter.
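To put some rough numbers on the photographer scenario, here is a back-of-envelope sketch. It assumes decimal megabytes (1 Mbyte = 8 Mbits) and ignores protocol overhead and compression, and the function names are mine. The 8Mbps link speed is the fixed connection package mentioned later in this post.

```python
PHOTO_MBYTES = 138       # RAW file size quoted above
FRAMES_PER_SECOND = 5    # burst rate quoted above


def megabytes_to_megabits(mbytes):
    """Convert megabytes to megabits, assuming 8 bits per byte."""
    return mbytes * 8


def upload_seconds(file_mbytes, link_mbps):
    """Time to send one file over a link, ignoring overhead (an assumption)."""
    return megabytes_to_megabits(file_mbytes) / link_mbps


# Data rate the camera generates while shooting a burst.
burst_mbps = megabytes_to_megabits(PHOTO_MBYTES) * FRAMES_PER_SECOND

# Time to upload a single RAW photo over an 8 Mbps connection.
seconds_at_8mbps = upload_seconds(PHOTO_MBYTES, 8)

print(f"Burst generates {burst_mbps} Mbps of data")
print(f"One photo takes {seconds_at_8mbps:.0f} seconds at 8 Mbps")
```

In other words a single camera in burst mode produces data far faster than any one connection at the venue will carry it, so the uploads queue up and the load on the network persists well after the race is over.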

Much modelling has gone into London 2012 – even down to the scheduling of events to control peak demand. This must be one of the few televised events that has enough clout to not be dictated to by the large TV networks (my guess – I could be very wrong).

Standardisation

Standardisation has also been a mantra. This of course allows for better purchasing power but also underwrites the need for reliability.

One consequence of this policy is that even if a venue already has a perfectly good communications infrastructure it has not been used. This might sound strange until you consider that adopting it would add risk and require extensive review of the adopted infrastructure, revisions to designs, test plans, implementation and operational processes, staff training and alignment of support contracts, as well as resolution of the management of contractual and marketing rights for sponsors who are committed to delivering for the Games. Bit of a mouthful but you can see the issues.

Scope

There are some neat numbers that make up the communications needs of the games.

There are for example up to 16,000 Cisco IP telephony handsets as well as 14,000 mobile handsets with wireless offload via 1,000 Wireless Access Points. If all of these were used at the same time then the voice traffic alone could amount to 3Gbps (30,000 handsets x 100kbps each, assuming the G.711 codec). They won’t all call at the same time but we are starting to see the size of the network needed.
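The voice traffic sum is easy to reproduce. In this sketch the 100kbps per call is the figure used above, while the `active_fraction` parameter and the 10% example are hypothetical values I have added to show how the number falls when only some handsets are in use.

```python
IP_HANDSETS = 16_000
MOBILE_HANDSETS = 14_000
KBPS_PER_CALL = 100  # per-call figure used in the post, assuming G.711


def aggregate_gbps(handsets, kbps_per_call, active_fraction=1.0):
    """Aggregate voice traffic in Gbps for a given share of handsets in use."""
    return handsets * active_fraction * kbps_per_call / 1_000_000


total_handsets = IP_HANDSETS + MOBILE_HANDSETS

# Worst case: every handset on a call at once.
worst_case_gbps = aggregate_gbps(total_handsets, KBPS_PER_CALL)

# Hypothetical case: 10% of handsets on a call at once.
ten_percent_gbps = aggregate_gbps(total_handsets, KBPS_PER_CALL, active_fraction=0.10)

print(f"All {total_handsets} handsets: {worst_case_gbps:.1f} Gbps")
print(f"10% of handsets: {ten_percent_gbps:.1f} Gbps")
```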

There are 36 competition venues and 41 training venues that need to be connected up, the latter less so I guess. BT has tried to standardise on a model for each venue though clearly some will need more infrastructure than others. Add in other venues such as admin and press and you get a total of 94 venues served by 80,000 network connections using 4,500km of internal cabling.

A network core provides a mesh of connectivity between central Points of Presence (PoPs), the Olympic venues and the outside world. This mesh consists of pairs of switches using Cisco’s VSS (Virtual Switching System) technology. Each virtual pair appears as a single logical switch capable of surviving hardware or circuit failure in its constituent systems, and the network as a whole can survive the failure of an individual system.

There is more. Each VSS pair connects to two separate Ethernet links which in turn connect to 10Gbps wavelengths (2 different colours of light) on a DWDM (Dense Wavelength Division Multiplexing) fibre connection.

There are two wavelengths (providing 20 Gbps of capacity) between each of the Olympic Park aggregation nodes and the PoPs and twelve (providing 120 Gbps of capacity – effectively 2 x redundant 60 Gbps) between the PoPs themselves. Several physically separate routes run between the Olympic Park and the rest of the network, and also between the PoPs. Real toys for the boys stuff here.
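The capacity figures follow directly from the 10Gbps per wavelength. Here is a small sketch of the sums, with function names of my own choosing. Halving the PoP to PoP figure reflects the "2 x redundant" description above and is my reading of it, not a statement of how BT provisions the links.

```python
WAVELENGTH_GBPS = 10  # capacity of each DWDM wavelength


def raw_capacity_gbps(wavelengths):
    """Total capacity across all wavelengths on a route."""
    return wavelengths * WAVELENGTH_GBPS


def protected_capacity_gbps(wavelengths, redundant_sets=2):
    """Capacity left if the wavelengths are split into redundant sets."""
    return raw_capacity_gbps(wavelengths) / redundant_sets


park_to_pop = raw_capacity_gbps(2)          # aggregation node to PoP
pop_to_pop = raw_capacity_gbps(12)          # between the PoPs
pop_to_pop_protected = protected_capacity_gbps(12)

print(f"Olympic Park node to PoP: {park_to_pop} Gbps")
print(f"PoP to PoP: {pop_to_pop} Gbps raw, {pop_to_pop_protected:.0f} Gbps protected")
```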

In the Olympic Park, each venue is provided with separate dark fibre routes back to the on-park PoPs. Around 14 venues are geared to produce up to 20 Gbps of traffic.

There are a variety of options for connectivity for off-Olympic Park venues supporting the connection of around 50 locations with a potential peak aggregate traffic of a further 40 Gbps.

Each competition venue has separate Ethernet access bearers to each PoP, providing either 1 Gbps Ethernet Access Direct (EAD) or 10 Gbps Optical Spectrum Access (OSA) capacity designed to a five-nines (99.999 per cent) availability target or roughly 5 minutes of downtime a year.

Smaller non-competition venues have a choice of dual or single EAD access bearers supporting 100 Mbps or 1 Gbps capacity but with lower availability targets. For venues outside the range of BT’s standard Ethernet access products such as the Weymouth sailing venue, a hybrid solution comprises local access from the venue at 1 Gbps, connecting to the nearest BT metro nodes. From there to the core PoPs, carriage is via a 1 Gbps long-haul WDM wavelength across BT’s carrier infrastructure.

We are getting into the realms of serious network engineering speak here. We can bring the conversation back to the hard currency of the journalist or photographer covering the games.

It’s not only hotel rooms that are expensive. For the duration of the games a fixed internet connection with max 8Mbps up and down costs £150 plus VAT for the “Gold” package assuming you booked before the end of last year.

If you are a photographer and want your own dedicated connection at the spot you are crouching at for that “perfect shot” that’s £248 – on top of the hundred and fifty quid you have already paid. Oh and it’s £248 per spot so if you are flitting between 100m finishing line, the high diving and the wrestling that’s £744 plus the £150 plus VAT.
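For the budget holders, the sums look like this. The sketch uses the prices quoted above and leaves VAT out altogether, since I have only quoted the pre-VAT figures. The function name is mine.

```python
GOLD_PACKAGE_GBP = 150   # fixed connection, price quoted before VAT
PER_SPOT_GBP = 248       # dedicated connection per shooting position


def photographer_cost_gbp(spots):
    """Quoted cost for the Gold package plus a number of dedicated spots, VAT excluded."""
    return GOLD_PACKAGE_GBP + PER_SPOT_GBP * spots


# 100m finishing line, high diving and wrestling.
three_spots = photographer_cost_gbp(3)

print(f"Three spots plus Gold package: £{three_spots} before VAT")
```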

What price that photo of Usain Bolt doing his pointing?

None of this expensive capacity is necessarily available to the punters visiting the games nor would they want to pay the price.

The next Olympics post, as yet unwritten, is going to look at the infrastructure in place to support the visitor. Stay tuned…

4 replies on “Olympic planning & infrastructure put in place by BT for the “Olympic Family””

Note I’ve taken information from a number of white papers published by the Institution for Engineering and Technology in the compilation of this post. Whilst I generally try and simplify technical descriptions to make it easier reading in this case some of the spiel in the originals may well be replicated herein. I can’t specifically recall which spiel and which papers but if you find some commonality elsewhere I’d be more than happy to acknowledge the original text.

Thanks for posting this Tref. It’s a fascinating insight into the infrastructure. I’m constantly amazed by the ever growing hunger for data. I wonder how this compares with Sydney 2000, not long back. Maybe a few hundred phone lines and some decent satellite links? How things have changed.

Looking forward to the next instalment.

Don’t forget we’re also the first to deploy 100 Gigabit Ethernet into the London Internet Exchange last week 🙂 Whilst the Olympic network is impressive lots more has gone on across the whole of BT’s network.

Neil.

Ooh interesting. Sounds like a potential blog post. I’ll give you a bell in the week.