I’ve been working with WebRTC for a few years now and from time to time talk in public about the technology and its potential. A pretty popular question goes along the lines of “that’s all very well, but where is the revenue in it for me?”

Around the time we did our first end-user demo of WebRTC technology making phone calls in 2012, I wrote up an article where I predicted a range of applications for the technology. Thankfully none of the fairly scary scenarios that I painted in that post have come to pass. Standardisation and browser support have been a bit slower than some of us would have liked, but in spite of this WebRTC has been quietly making inroads into the traditional communications space and is also being used to deliver novel applications. With the Microsoft announcement about WebRTC support in the next version of their browser, and Google’s massive strides in reliability and interoperability in the core WebRTC project, the future of the technology now looks certain.

At ipcortex we’ve worked on a number of WebRTC developments. These include RTCEmergency, a weekend hack last year to re-imagine the way we do emergency services calls, which won a Google prize for innovation at TADHack Madrid. With feet closer to the ground, they also include keevio, our first major commercial WebRTC end-user application, which provides a full range of business Unified Communication services on any device with a WebRTC-capable browser.

Amazon Mayday and other apps, do they or don’t they?

An interesting property of WebRTC is that in a really well implemented application, a user need not know or care whether it is using WebRTC. It is of course really easy to tell when a web page prompts to use the device camera or microphone in Chrome or Firefox and then delivers in-page audio and video without a 10-minute plugin dance. For a dedicated mobile app with no web interface, though, it is impossible to know for sure without resorting to examining the source code or tracing interactions on the wire.

That is how the world found out that the Amazon Mayday service was using WebRTC to provide real time video chat as part of its live support service.

Other consumer communication applications that have more or less publicly adopted WebRTC to deliver real time communications over the past couple of years include:

- Comcast – streaming personal video between set-top boxes and handhelds using WebRTC

- AT&T – allowing calls to/from own mobile number via browser & API

- Google Hangouts – Google are the major force behind WebRTC development, and it was seen as a bit of a coming of age for the technology when they publicly announced, halfway through 2014, that their flagship Hangouts product was now using it.

- Facebook Messenger – head over to www.messenger.com using Chrome and start an audio or video call with a Facebook friend. Your conversation just used WebRTC. That is a huge user base.

Now to some extent many of these don’t need to use WebRTC to deliver what they do; all of these companies have sufficient muscle that they could have developed dedicated applications or plugins to achieve the same functionality – albeit in a less usable way. Indeed most of the examples above still fall back to purpose-built plugins if you access them from a browser that doesn’t include native WebRTC support, so WebRTC is just a way of streamlining certain kinds of access.

That’s the point really, WebRTC isn’t something users care about – it should be invisible. It is the applications you create with it that have the user-visible value.

The value is in the application not the network

Whilst the simple messaging use cases for WebRTC have been early adopters, and nobody could claim that Facebook or WhatsApp are commercially insignificant, their existence has probably closed the door on making vast amounts of cash out of building a simple consumer messaging application with a bit of WebRTC voice and video thrown in.

If that is bad news for a would-be application developer, the good news is that the universal end-to-end capability that WebRTC delivers means that smart applications can still emerge which generate value by streamlining some aspect of communication.

Metcalfe’s Law (smart guy, even if he did have to eat his own words after predicting the Internet would collapse by 1997) says that the value of a telecommunication network is proportional to the square of the number of participants. This was later tweaked for social networks to be closer to n log(n) for the number of participants, but you get the point – it is a hockey stick curve where the biggest network creates vast value and smaller networks have a very low comparative value. It explains why, for example, Fring sold a couple of years ago for a reported $50m while the WhatsApp acquisition closed out at close to $22bn. It also explains RCS’s commercial failure. It is really hard to build networks that acquire enough scale quickly enough to have significant value.
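To put rough numbers on that curve, here is a minimal sketch comparing the two value models mentioned above. The network sizes are hypothetical round figures chosen only for illustration, not real user counts for any service, and the function names are my own.

```python
import math

def metcalfe_value(n: int) -> float:
    """Relative network value under Metcalfe's Law: proportional to n squared."""
    return float(n) ** 2

def nlogn_value(n: int) -> float:
    """Relative network value under the n log(n) variant."""
    return n * math.log(n)

def value_ratio(big: int, small: int, model) -> float:
    """How many times more valuable the bigger network is under a given model."""
    return model(big) / model(small)

# Hypothetical network sizes (assumptions for illustration only).
small_network = 1_000_000
big_network = 100_000_000

print("n^2 ratio:     ", value_ratio(big_network, small_network, metcalfe_value))
print("n log(n) ratio:", value_ratio(big_network, small_network, nlogn_value))
```

With these assumed sizes, a network with 100 times the participants comes out 10,000 times more valuable under the n squared model and roughly 133 times more valuable under n log(n). Either way, the gap in value is larger than the gap in size.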

WebRTC is a bit different as, once browser and device support is complete, it builds a ready rich communication network of “everything on the Internet”. That isn’t by the way just “everything on the Internet with a screen”; we’ve put full implementations of WebRTC applications on a Raspberry Pi and strapped it under a quadcopter running off the flying machine battery (more on that later this week!).

A really important feature of building an application with WebRTC is that you get a huge potential Metcalfe’s Law advantage before you write a line of code (but so does everyone else).

Contextual vs Free Communication

So if there is a vast amount of potential derived from intrinsic network size, and one class of basic social communication applications is already stitched up, where will the next killer communication applications, perhaps using WebRTC, come from?

Most new applications succeed because they are either some large factor better than what currently exists (10 times is an oft quoted number), or they solve a universally felt pain point.

Thankfully there are lots of pain points in communication, and it is relatively easy to deliver 10 times the value of a 3 kHz phone call. Unlike the personal realm, where some quite good messaging tools not only exist but now dominate, business in many areas still relies pretty heavily on basic communication mechanisms.

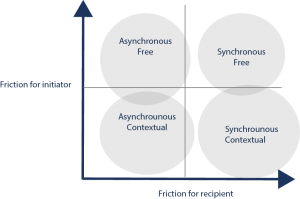

Look at how phone calls work. Users sit working on a processing task and in the middle of this a loud intrusive ringing sound comes from a plastic box on their desk. They have just a few seconds to decide whether to respond, and the only choice they have is to ignore it and lose the conversation (or even worse commit to a longer interruption to pick up a voicemail later), or pick it up and be immediately dropped into a high bandwidth synchronous communication with no context. The only information they may have about the reason it is ringing will be the name or number of the caller. They must either respond immediately, context switching away from what they are doing, or not respond at all. Depending on the job that you do, just the interruption itself, never mind the actual cost of dealing with the communication, has probably cost 5-10 mins of productive work. You really wouldn’t invent a system like that from scratch today, and indeed much of the value in existing business phone systems relates to applying workarounds for these fundamental drawbacks (call queues to make interactions asynchronous for the recipient, screen popping/click to dial to give the agent context etc).

Phone calls are then initiated outside of any particular context, and once started are synchronous, demanding the undivided attention of both participants.

It is far easier to initiate communication without moving away from a task flow, and with the benefit of additional context. In this way attention flows naturally and productively between communicating and processing. This is one of the important ways that WebRTC will deliver contextual communication from within other task based systems – dealing with customer support communications within the context of a support application etc. Done properly, because it is web based, this will be entirely seamless and the user will just view communication as another task experience.

Synchronous to Asynchronous

Many folks of my age are conditioned to, and therefore still obsessed with, calling each other. Fast forward to the next generation, though, and they run their lives pretty much exclusively, and far more effectively, on asynchronous messaging, only escalating to realtime (usually group) voice/video when they really want to give some high bandwidth communication their undivided attention. This is way more efficient and allows interleaving and prioritising of communications and processing.

Not only will the next business communication apps be primarily contextual, if they want to remove pain points, they will also offer asynchronous communications as the norm with a simple escalation path to high bandwidth, rich synchronous communications like video and screensharing with voice.

So in summary what does all this mean to me if I’m thinking of deploying my first WebRTC based service or application?

- Don’t think of it as a “WebRTC service”. That shouldn’t be visible to your users if you do your job properly.

- A personal multimedia messaging application for free communication among your own customers is fine, but won’t set the world on fire – you will be competing with WhatsApp, Facebook, appear.in, Google etc and Metcalfe’s Law is on their side (unless federation ever happens, and don’t bet on that).

- If you are looking for a USP, think of integration with a key business process to either massively streamline communication or remove a pain point.

- Find a bunch of 16-25 year olds and test your application with them. If it has the key attributes of contextual and asynchronous then it will probably pass muster with them. If it doesn’t, they will wonder what planet you come from.

- Don’t think about it for too long – just get on and do it! The time for producing WebRTC toolkits, APIs, test applications and pilots was 2013. Now it is about delivering polished applications and services ahead of the competition.

Previous posts from the ipcortex WebRTC week:

Welcome to ipcortex WebRTC week on trefor.net